SONiC at the Edge: Cheaper Switches Don’t Mean a Cheaper Network


Disclosure: I currently work on a team deploying SONiC at scale. I’m not a neutral party, but I’ll be honest about both sides of the ledger.

In Part 1, I covered the technical shift: 1G access-layer hardware now runs SONiC, the feature set is filling in, and the support ecosystem is real. If you haven’t read that yet, start there.

This post is about the question that comes after “can we?” — the “should we?” That answer has less to do with switch specs and more to do with how your organization spends money, builds teams, and tolerates risk.

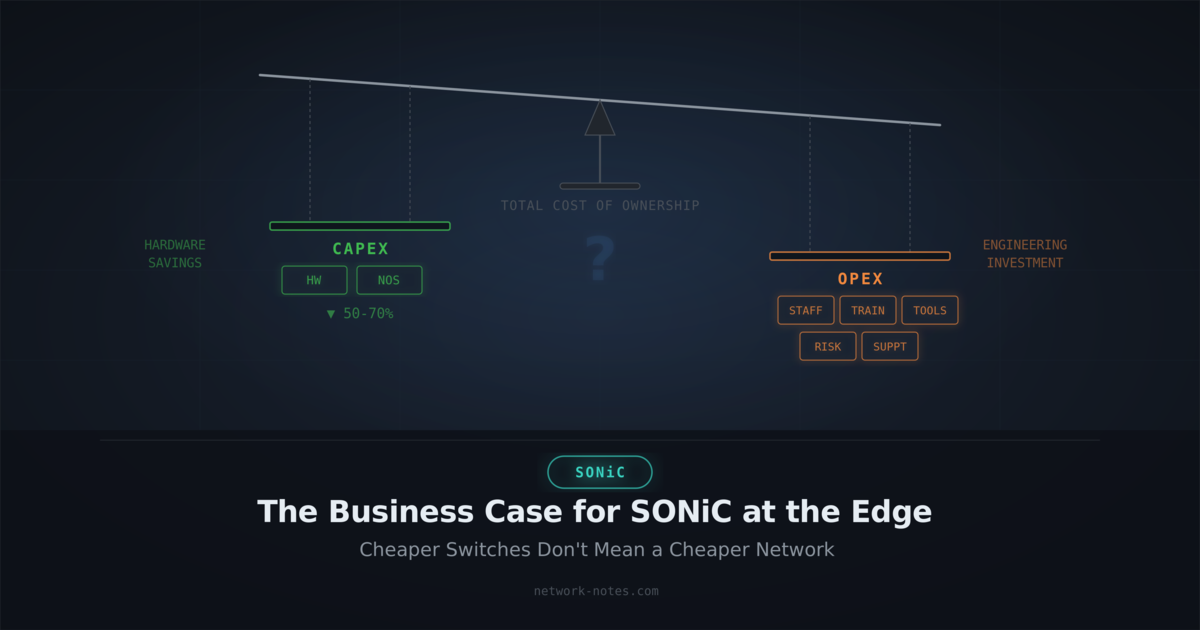

The Capex-Only Trap

Everyone leads with hardware savings when pitching disaggregated networking. And the savings are real. White box switches commonly cost one-half to one-third as much as a comparable Cisco Catalyst or Arista switch, based on list-price comparisons across current 48-port 1G PoE models. At fleet scale, that delta gets attention from finance teams fast.

But hardware is the easiest line item to compare and often the smallest. One TCO analysis of a 15,000-device deployment found that purchase price represented only 25–30% of five-year costs, with the remaining 70–75% coming from deployment, management, support, and retirement. Network infrastructure tends to follow a similar pattern, though the exact ratio varies by environment. A discussion on the Network Automation Forum captured the tension well: one engineer cited potential savings of $1.3 million on a single edge upgrade, while others in the thread pointed out that compensating costs in staffing and operational complexity can eat into those numbers significantly.
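The reason a large hardware discount shrinks at the TCO level is simple weighting. Here is a minimal sketch that takes the 25–30% hardware share cited above as an input and lumps every non-hardware cost change into one hypothetical percentage (`other_cost_change` is an illustrative assumption, not a measured figure):

```python
def tco_change(hardware_share: float, hardware_discount: float,
               other_cost_change: float = 0.0) -> float:
    """Fractional change in five-year TCO (negative means savings).

    hardware_share:    hardware's share of baseline five-year cost (e.g. 0.25)
    hardware_discount: fractional hardware price cut (0.5 = half price)
    other_cost_change: hypothetical fractional change in all non-hardware costs
                       (+0.2 = 20% more staffing, tooling, and support)
    """
    if not 0.0 <= hardware_share <= 1.0:
        raise ValueError("hardware_share must be between 0 and 1")
    hardware_delta = -hardware_share * hardware_discount
    other_delta = (1.0 - hardware_share) * other_cost_change
    return hardware_delta + other_delta


if __name__ == "__main__":
    # Half-price hardware, nothing else changes.
    print(f"{tco_change(0.25, 0.5):+.1%}")       # -12.5%
    print(f"{tco_change(0.30, 0.5):+.1%}")       # -15.0%
    # Half-price hardware, but non-hardware costs rise 20% (hypothetical).
    print(f"{tco_change(0.25, 0.5, 0.2):+.1%}")  # +2.5%
```

Even at half price, the hardware line moves five-year TCO by only 12.5–15%, and a modest rise in the other 70–75% of spend is enough to erase it.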

Here’s what actually costs money over five years:

- Staffing. Someone has to operate this network. SONiC is Linux. Your team needs to be comfortable with Debian, systemd, Docker containers, and debugging at the OS level, not just CLI-driven NOS administration. If your current team can’t do that, you’re either retraining them or hiring people who can. Both cost money.

- Training. Retraining a team that’s spent a decade on IOS or EOS is a real investment. It’s not a week-long bootcamp. It’s months of building muscle memory on a fundamentally different operational model.

- Tooling and management plane. Your existing monitoring, config management, and automation probably assumes a traditional NOS. Some of it ports over. Some of it gets rebuilt. That rebuild has a cost in engineering hours and in the operational risk of running new tooling in production. The bigger gap: SONiC doesn’t ship with a CloudVision or DNA Center equivalent. There’s no turnkey management plane for ZTP, firmware lifecycle, config compliance, or centralized visibility. Organizations with the scale to justify SONiC have often already built internal tooling for these functions and aren’t relying on vendor-provided platforms, which lowers this cost significantly. But if your operations today depend on a vendor management suite, budget for the engineering effort to replace it.

- Wireless and adjacent systems. SONiC is a wired switching NOS. You’ll need a separate wireless management stack, which adds another operational surface and cost line. Factor in the integration work between your wired and wireless management planes. It won’t be as seamless as a single-vendor converged solution.

- Support contracts. Commercial SONiC distributions aren’t free. Broadcom Enterprise SONiC, Dell Enterprise SONiC, and Aviz Certified Community SONiC all come with support contracts. Cheaper than Cisco SmartNet? Usually. Free? No.

- Incident response. Your mean time to resolution will be longer on a new platform. That’s not a knock on SONiC. It’s true of any platform your team hasn’t operated for years. It’s especially true at the access layer, where the SONiC ecosystem is younger: Marvell Prestera SAI drivers, 1G hardware platforms, and features like PVST+ and 802.1X are all more recent and less battle-tested than their data center counterparts. Budget for it.

The BOM comparison is where the conversation starts. It shouldn’t be where it ends.

What You’re Actually Trading

The pitch for disaggregated networking is “escape vendor lock-in.” The reality is messier: you’re trading one type of dependency for another.

With a traditional vendor, the deal is straightforward. You write a check for hardware, software, and support bundled together. You get a single number to call when things break. You’re locked to their roadmap, their pricing, and their release cadence, but you’re also insulated from a lot of operational complexity. That insulation has value, and it’s easy to underestimate until it’s gone.

With SONiC and disaggregated hardware, you get procurement flexibility. You can source switches from Celestica this quarter and Edgecore next quarter. You’re not captive to one vendor’s pricing. But you take on the integration work. You own the testing matrix: every NOS version against every hardware platform against every feature combination you deploy, a validation effort that traditional vendors absorb for you. That responsibility lands on your engineering team, and it doesn’t go away after the initial deployment.
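To see why the validation burden doesn’t go away, it helps to count the cells. Here is a minimal sketch with hypothetical version, platform, and feature names (none refer to real releases or SKUs). Each optional feature doubles the number of feature combinations, and every new NOS release or hardware platform multiplies the whole grid:

```python
from itertools import combinations, product

# Hypothetical inventory; replace with your own releases, SKUs, and features.
NOS_VERSIONS = ["nos-2024.11", "nos-2025.05"]
PLATFORMS = ["vendor-a-48p-poe", "vendor-b-48p-poe", "vendor-b-24p"]
OPTIONAL_FEATURES = ["802.1X", "PVST+", "MCLAG"]


def feature_sets(features: list[str]) -> list[tuple[str, ...]]:
    """Every subset of optional features, from none enabled to all enabled."""
    return [combo for k in range(len(features) + 1)
            for combo in combinations(features, k)]


def validation_matrix(versions, platforms, features):
    """Cartesian product of NOS version x hardware platform x feature set."""
    return list(product(versions, platforms, feature_sets(features)))


if __name__ == "__main__":
    matrix = validation_matrix(NOS_VERSIONS, PLATFORMS, OPTIONAL_FEATURES)
    print(len(matrix), "combinations to validate")  # 2 x 3 x 2**3 = 48
    for version, platform, feats in matrix[:3]:
        print(version, platform, feats or "(baseline)")
```

In practice, teams prune this grid to the feature sets they actually deploy. The point is that deciding what to prune, and testing what remains, becomes your job rather than the vendor’s.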

Focus on switching costs, not lock-in. Every platform has lock-in of some kind; the question is what it costs you to change. With Cisco, the switching cost is a hardware refresh and a config migration. With SONiC, the switching cost is lower on hardware but potentially higher on the operational and tooling side if you’ve built deep integrations.

There’s an upside to the trade that’s easy to overlook: with an open-source NOS, you can contribute upstream, influence the roadmap, and fix bugs yourself (or fund someone to). You’re not waiting on a vendor’s release train for a feature you need next quarter. That agency has real value for organizations with the engineering capacity to exercise it.

The honest framing: you’re not eliminating dependency. You’re moving it from a vendor relationship to an internal engineering capability. Whether that’s a good trade depends entirely on your organization.

The Scale Threshold

The business case for SONiC at the access layer is scale-dependent. This isn’t a controversial statement — it’s just math.

At small scale — say, under 100 switches — the engineering investment dominates. You’re hiring or retraining people, building tooling, standing up a lab, and absorbing the risk of a new platform. The hardware savings on 100 switches don’t cover that. Buy Meraki or Aruba and spend your engineering time on something else.

At medium scale — a few hundred switches across a handful of sites — it gets interesting but not obvious. The hardware savings start to add up, but you’re still carrying the full weight of the operational investment. This is the zone where the decision depends heavily on your team’s existing capabilities. If you already have strong automation practices and Linux-comfortable engineers, the marginal cost of adding SONiC is lower. If you’re starting from scratch on both, it’s a harder sell.

At large scale — thousands of switches across dozens or hundreds of sites — the math starts to favor SONiC, but only if the organizational readiness factors line up. The per-unit hardware savings compound across the fleet and across refresh cycles. The operational consistency argument kicks in: one NOS from spine to access port means one automation framework, one monitoring pipeline, one set of runbooks. And the negotiating power is real. When you’re buying thousands of switches, being able to source from multiple hardware vendors changes the dynamic. Scale is necessary for the business case to work, but it’s not sufficient on its own.

One thing the scale discussion often misses: “do nothing” isn’t free either. Vendor price increases compound: at a 3–4% annual hardware uplift, list prices run roughly 16–22% higher by a refresh five years out and 34–48% higher by the one after, across every unit in a 2,000-switch fleet. Lock-in deepens as you build more automation and tooling around a proprietary platform, raising your switching costs every year you stay. And the talent pool for legacy NOS administration is gradually shrinking as the industry shifts toward Linux-native infrastructure. The status quo has a cost trajectory too. Make sure your business case accounts for it.
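The status-quo trajectory can be projected the same way as the SONiC case. Here is a minimal sketch, assuming a fixed annual uplift applied to the whole fleet’s list price and refreshes at years 5 and 10 (the five-year cadence used elsewhere in this post). The $10,000 unit price is a hypothetical placeholder, not a quote:

```python
def price_index(annual_uplift: float, years: int) -> float:
    """Compounded price multiplier after `years` of a fixed annual uplift."""
    return (1.0 + annual_uplift) ** years


def refresh_spend(fleet_size: int, unit_price_today: float, annual_uplift: float,
                  refresh_years: list[int]) -> dict[int, float]:
    """Fleet cost at each refresh year, with list prices compounding from today."""
    return {year: fleet_size * unit_price_today * price_index(annual_uplift, year)
            for year in refresh_years}


if __name__ == "__main__":
    for uplift in (0.03, 0.04):
        print(f"{uplift:.0%}/yr: x{price_index(uplift, 5):.3f} at year 5, "
              f"x{price_index(uplift, 10):.3f} at year 10")
    # Hypothetical unit price; substitute your negotiated price.
    spend = refresh_spend(2_000, 10_000.0, 0.035, [5, 10])
    for year, cost in spend.items():
        print(f"year {year}: {cost:,.0f}")
```

Putting this line next to the SONiC projection keeps the comparison honest: the alternative to migrating is a rising cost curve, not a flat one.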

The variables that determine where your break-even sits:

- Fleet size. More switches = more capex savings to offset the opex investment.

- Number of sites. More sites amplify both the savings (more hardware) and the complexity (more operational surface area).

- Team maturity. A team that already runs infrastructure as code has a shorter ramp than one that manages everything by hand.

- Contract timing. If you’re mid-cycle on a vendor contract with favorable terms, the urgency drops. If you’re facing a refresh with a steep price increase, the urgency spikes.

- Greenfield vs. brownfield. New sites are easier. Migrating existing sites means running two platforms in parallel during the transition, which has its own cost.
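The fleet-size variable can be turned into a rough break-even point: divide the fixed cost of building the capability by what each switch contributes in savings over your planning horizon. Here is a minimal sketch in which every input is hypothetical. It deliberately ignores site count and team maturity, which change the fixed cost rather than fit neatly into the formula:

```python
import math


def break_even_fleet(fixed_program_cost: float, hw_savings_per_refresh: float,
                     refreshes: int, per_switch_extra_cost: float = 0.0) -> int:
    """Smallest fleet whose hardware savings cover a fixed engineering program.

    fixed_program_cost:     hiring, training, lab, and tooling over the horizon
    hw_savings_per_refresh: per-switch price delta at each refresh
    refreshes:              refresh cycles inside the planning horizon
    per_switch_extra_cost:  hypothetical per-switch cost SONiC adds over the
                            horizon (e.g. a support-contract premium)
    """
    net_per_switch = hw_savings_per_refresh * refreshes - per_switch_extra_cost
    if net_per_switch <= 0:
        raise ValueError("no fleet size breaks even: per-switch savings are not positive")
    return math.ceil(fixed_program_cost / net_per_switch)


if __name__ == "__main__":
    # Hypothetical: $3M program, $2,000 saved per switch per refresh, two refreshes.
    print(break_even_fleet(3_000_000, 2_000, 2))       # 750
    # Same, with a hypothetical $500 per-switch support premium over the horizon.
    print(break_even_fleet(3_000_000, 2_000, 2, 500))  # 858
```

At 100 switches, those same inputs produce $400K of hardware savings against a $3M program, which is the small-fleet case described above.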

Organizational Readiness

Before you evaluate hardware or run a PoC, ask these questions about your organization:

Does your team manage infrastructure as code today? If your network engineers are already writing Ansible playbooks, using Git, and deploying through CI/CD pipelines, the transition to SONiC is a platform change, not a paradigm change. If your workflow is “SSH into the switch and type commands,” you have a culture shift ahead of you that’s bigger than the technology shift.

Can your network engineers troubleshoot at the Linux OS level? SONiC problems don’t always look like network problems. Sometimes it’s a container that didn’t start. Sometimes it’s a systemd service in a failed state. Sometimes it’s a kernel driver issue. Your team needs to be as comfortable with journalctl and docker logs as they are with show ip bgp.
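A first-pass triage of the “container that didn’t start” case can be scripted. Here is a minimal sketch, assuming the switch runs Docker and that you adjust the expected container list to your image. The names below are common in community SONiC builds, but the exact set varies by build and platform. The script only compares `docker ps --format '{{.Names}}'` output against that list; it is not a SONiC tool:

```python
import subprocess

# Assumed expected set; confirm with `docker ps` on a known-good switch running your image.
EXPECTED_CONTAINERS = {"database", "swss", "syncd", "bgp", "teamd", "pmon", "lldp", "snmp"}


def missing_containers(docker_ps_names: str, expected: set[str]) -> set[str]:
    """Return expected names absent from `docker ps --format '{{.Names}}'` output."""
    running = {line.strip() for line in docker_ps_names.splitlines() if line.strip()}
    return expected - running


def running_container_names() -> str:
    """Run `docker ps` (running containers only) and return one name per line."""
    result = subprocess.run(
        ["docker", "ps", "--format", "{{.Names}}"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


if __name__ == "__main__":
    try:
        names = running_container_names()
    except (OSError, subprocess.CalledProcessError) as exc:
        print("could not query docker:", exc)
    else:
        missing = missing_containers(names, EXPECTED_CONTAINERS)
        if missing:
            # `docker logs <name>` on each missing container is the usual next step.
            print("not running:", ", ".join(sorted(missing)))
        else:
            print("all expected containers are running")
```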

Can you recruit Linux-native network engineers? This talent pool is growing, but it’s still smaller than the pool of traditional network engineers. An EMA survey on network automation found that 27% of respondents pointed to staffing issues and skills gaps as a top challenge, with one engineer noting: “The most challenging thing for me is the lack of network engineers who can contribute to automation. The community is small, and it’s hard to find people who can help you solve a problem.” If you’re in a market where hiring is already hard, factor in the recruiting challenge. If you’re in a tech hub where Linux skills are common, this is less of a concern.

Is your organization comfortable with open-source support models? Commercial SONiC distributions come with support contracts, but the support experience is different from calling Cisco TAC. Community SONiC means GitHub issues and mailing lists. Know which model your organization can tolerate.

Do you have the patience for a multi-year build? This isn’t a forklift upgrade you execute in a quarter. It’s a capability you build over years: lab, pilot, limited production, broad rollout. A realistic timeline, in my experience: 6–12 months to stand up a lab and run a pilot, another 6–12 months for limited production at a handful of sites, and 1–2 years to reach broad rollout. Most organizations won’t see net positive ROI until year two or three, when the hardware savings across refresh cycles start outpacing the cumulative engineering investment. If your leadership expects payback in the first year, set that expectation early. Or find a different project.

If you answered “no” to most of these, SONiC isn’t necessarily the wrong choice. But your business case needs to include the cost of getting to “yes” on each one.

Building the Business Case

A credible business case has two parts: the spreadsheet and the narrative. You need both.

The spreadsheet covers what you can quantify:

- Hardware cost delta: per-unit savings × fleet size × number of refresh cycles in your planning horizon

- Support contract delta: commercial SONiC distribution costs vs. current vendor support

- Staffing costs: new hires, training programs, potential salary premium for Linux networking skills

- Tooling costs: what you build or buy to replace vendor-provided management platforms

- Transition costs: lab buildout, pilot program, migration execution, parallel operation during cutover

To make this concrete: imagine a 1,000-switch fleet where white box hardware saves $2,000 per unit (a conservative figure, given the one-half to one-third pricing cited above). Over two refresh cycles (roughly ten years), the capex delta is $4M. Now set an engineering investment of $1.5M in year one (hiring, lab, tooling) and $500K/year ongoing against it. If the first refresh lands in year one, its $2M in savings covers the year-one spend with $500K to spare, and the running totals are level at the end of year two. That early picture looks good on a slide. Run the same numbers out to year ten, though, and engineering spend totals $6M against $4M in hardware savings. If you also need to rebuild your management plane, add $500K–$1M to year one. If your team needs 12 months of ramp time before they’re productive on SONiC, push any payback out another year. The point isn’t the specific numbers. It’s that the spreadsheet has to include all the lines and the full planning horizon, not just the hardware line and the first refresh.
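The worked example can be replayed as a year-by-year cumulative cash-flow model. Here is a minimal sketch in which the defaults mirror the example’s figures. The refresh timing (years 1 and 6), realizing each refresh’s savings in full in a single year, and booking engineering spend once per year are simplifying assumptions:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """Inputs for a simple capex-savings vs. engineering-spend model."""
    switches: int = 1_000
    savings_per_switch: float = 2_000.0
    refresh_years: tuple[int, ...] = (1, 6)    # assumed: one refresh every five years
    year_one_engineering: float = 1_500_000.0  # hiring, lab, tooling
    ongoing_engineering: float = 500_000.0     # per year after year one
    horizon_years: int = 10


def cumulative_net(s: Scenario) -> list[float]:
    """Cumulative (hardware savings - engineering spend) at the end of each year."""
    running = 0.0
    totals = []
    for year in range(1, s.horizon_years + 1):
        if year in s.refresh_years:
            # Whole-fleet refresh: the per-unit delta is realized once per refresh.
            running += s.switches * s.savings_per_switch
        running -= s.year_one_engineering if year == 1 else s.ongoing_engineering
        totals.append(running)
    return totals


if __name__ == "__main__":
    for year, net in enumerate(cumulative_net(Scenario()), start=1):
        print(f"year {year:2d}: {net:>12,.0f}")
    # Same fleet plus a $750K management-plane rebuild in year one (hypothetical midpoint).
    rebuild = Scenario(year_one_engineering=2_250_000.0)
    print("with rebuild, year 10:", f"{cumulative_net(rebuild)[-1]:,.0f}")
```

Moving `refresh_years` changes the shape of the curve but not the ten-year total, as long as both refreshes fall inside the horizon. The total only turns positive if fleet size, per-unit savings, or lines outside this model outweigh the ongoing engineering spend.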

The narrative covers what you can’t easily put in a cell:

- Procurement leverage — your incumbent vendors won’t stand still. They’re actively raising prices; Cisco implemented an average ~3.4% hardware uplift in late 2025, with similar increases for technical services. Walking into a renewal with a credible SONiC PoC in your back pocket will sharpen their pencil, and that leverage is valuable even if you never deploy a single white box switch. But the reverse is also true: if your business case depends on a specific capex delta, aggressive incumbent discounting can erode it. Build your case on the operational and strategic benefits, not just the BOM savings, because the BOM savings are the part your current vendor can most easily match

- Operational consistency — one NOS across your infrastructure reduces the cognitive load on your team and simplifies automation

- Talent pipeline — you’re hiring from the Linux and DevOps talent pool, not just the “CCIE or equivalent” pool

- Future optionality — you’re not waiting on one vendor’s roadmap for features you need. If the community or a different distro ships it first, you can adopt it

- Ecosystem fragmentation risk — multiple commercial SONiC distributions (Broadcom, Dell, Aviz, Asterfusion) have different feature sets, release cadences, and proprietary extensions. Evaluate portability between distros, not just between SONiC and traditional vendors. If you build deep integrations against one distro’s proprietary MCLAG implementation, you’ve traded one form of vendor lock-in for another

Don’t oversell the savings. A business case that honestly acknowledges the tradeoffs (“we’ll save X on hardware but invest Y in engineering capability”) is more credible than one that only shows the capex delta. Decision-makers who’ve been around long enough have seen the “this will save us millions” slide deck before. They’re looking for the slide that says “here’s what could go wrong and here’s how we’ve accounted for it.”

What’s Next

This post covers whether the business case works. It doesn’t cover how you execute. If there’s interest, Part 3 will get into the operational details:

- Commercial SONiC support vs. Cisco TAC in practice. Response times, escalation paths, bug fix turnaround. What “different” actually looks like when you’re troubleshooting a production outage at 2 AM.

- Brownfield migration strategies. How do you actually migrate a live campus network from IOS to SONiC? Site-by-site? Building-by-building? How long do you run both platforms in parallel, and what does that parallel operation cost?

- The testing and validation burden. What the NOS version × hardware platform × feature set matrix looks like in practice, and how to build a validation pipeline that doesn’t consume your entire engineering team.

My Take

The technology is ready; Part 1 covered that. The business case is real at scale. But “at scale” is doing a lot of work in that sentence.

The organizations that will succeed with SONiC at the edge are the ones that treat it as an organizational capability investment, not a cost-cutting exercise. If the only line in your business case is “cheaper switches,” you’re setting yourself up for a painful surprise when the opex bill comes due.

The right framing: SONiC at the edge is a bet on your team’s ability to operate open infrastructure. The hardware savings fund that bet. The payoff is operational flexibility and procurement leverage that compounds over time — but only if you invest in the team and tooling to realize it.

If your organization has the scale, the engineering maturity, and the patience for a multi-year build, the math works. If you’re looking for a quick win on next quarter’s budget, buy Meraki.